Nodejs vs Play for Front-End Apps

Friday, March 25, 2011

I’m resuscitating this old article to support some inbound traffic.

Mar 29, 2011: The source used for these tests is now available at https://github.com/s3u/ebay-srp-nodejs and https://github.com/s3u/ebay-srp-play.

Mar 27, 2011: I updated the charts based on new runs and some feedback. If you have any tips for improving numbers for either Nodejs or Play, please leave a comment, and I will rerun the tests.

We often see “hello world” style apps used for benchmarking servers. A “hello world” app can produce low-latency responses under several thousand concurrent connections, but such tests do not help in making choices for building real-world apps. Here is a test I did at eBay recently comparing a front-end app built using two different stacks:

- nodejs (version 0.4.3) as the HTTP server, using Express (with NODE_ENV=production) as the web framework with EJS templates and cluster for launching node instances (cluster launches 8 instances of nodejs for the machine I used for testing)

- Play framework (version 1.1.1) as the web framework in production mode on Java 1.6.0_20.

My intent in choosing the Play framework was to pick a stack that uses rails-style controllers and view templates for front-end apps, but runs on the JVM. Java-land is littered with a large number of complex legacy frameworks that don’t even get HTTP right, but I found Play easy to work with. I spent nearly equal amounts of time (under two hours) building the same app on nodejs and Play.

The test app is purpose-built. It includes a single search results page that renders search results fetched from a backend source. The flow is simple: the user submits some text, the front end fires off a request to the backend, the backend responds with JSON, and the front end parses it and renders the results using a set of HTML templates. The idea of this app is to represent front-end apps that produce markup with or without backend IO.
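The parse-and-render half of that flow can be sketched in a few lines of JavaScript. This is only an illustration, not the code from the test apps: the template functions stand in for the EJS files, and the `{ items: [{ title }] }` shape of the backend JSON is an assumption made for the sketch.

```javascript
// Hypothetical sketch of the parse + render steps; not the code used in the tests.

// Escape text before placing it into markup.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;');
}

// Stand-ins for the page's template files (the real app used EJS templates).
const templates = {
  header: (query) => `<h1>Results for ${escapeHtml(query)}</h1>`,
  item: (item) => `<li>${escapeHtml(item.title)}</li>`,
  footer: (count) => `<p>${count} results</p>`,
};

// Parse the backend's JSON body and render the page from the templates.
// The { items: [{ title }] } shape is assumed for illustration only.
function renderResultsPage(query, backendBody) {
  const results = JSON.parse(backendBody);
  const items = results.items || [];
  return [
    templates.header(query),
    '<ul>',
    ...items.map((item) => templates.item(item)),
    '</ul>',
    templates.footer(items.length),
  ].join('\n');
}
```

Calling `renderResultsPage(query, '{}')` renders the page with empty results, which is roughly what a render-only run without backend IO exercises.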

In my test setup, the average result from the backend is about 150k; it is JSON formatted and not compressed. The results page consists of 8 templates, one for each part of the page such as the header, footer, and sidebar. The template files range in size from 250 bytes to under 2k. To ensure that backend latency does not influence the tests, search requests are proxied through Apache Traffic Server acting as a forward proxy, with the cache tuned to always generate a hit. Such a high cache hit rate is not realistic, but it let me isolate the tests from the cost of going through the uncontrolled public Internet to get search results.
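For readers unfamiliar with forward proxies, here is a minimal sketch of how a Node client can route a backend request through one: it connects to the proxy and puts the absolute target URL in the request line, while the `Host` header still names the origin. The helper name, hosts, and port below are hypothetical and are not taken from the test setup.

```javascript
// Build options for Node's http.request so the call goes through a forward proxy.
// viaForwardProxy is a hypothetical helper written for this illustration.
function viaForwardProxy(targetUrl, proxyHost, proxyPort) {
  const target = new URL(targetUrl);
  return {
    host: proxyHost,                 // connect to the proxy, not the origin
    port: proxyPort,
    method: 'GET',
    path: target.href,               // absolute URI in the request line
    headers: { Host: target.host },  // origin host the proxy should fetch from
  };
}

// Made-up backend URL and proxy address, for illustration only.
const proxyOptions = viaForwardProxy(
  'http://backend.example/search?q=ipod',
  '127.0.0.1',
  8080
);
```

The resulting object can be passed to `http.request` or `http.get` from Node's built-in `http` module.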

Note that the test environment is not ideal: the test client, the server, and the cache were all running on the same box. The box is a quad-core Xeon with 12GB of RAM running Fedora 14 (2.6.35.6–45.fc14.x86_64 kernel).

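I ran the tests using ab (ApacheBench) with keep-alive enabled (-k), 300 concurrent connections (-c), and 200,000 requests per run (-n):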

ab -k -c 300 -n 200000 {URI}

The tests include the following configurations:

- Render — No IO: Render the page without any IO — this configuration generates HTML from the templates with empty results.

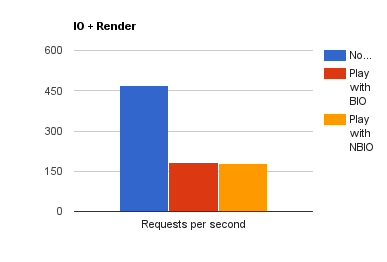

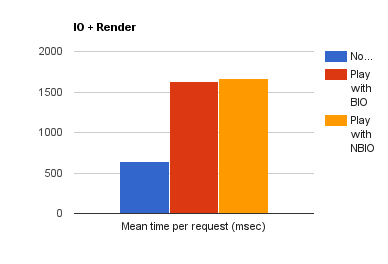

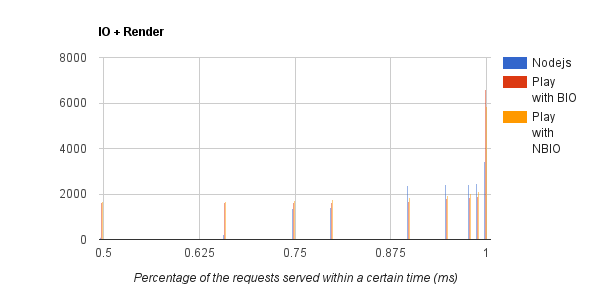

- IO + Render: Render the page with results.

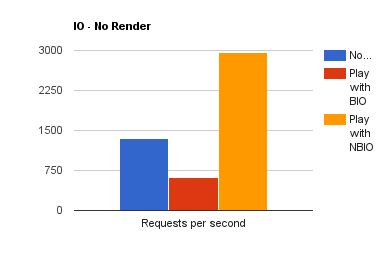

- IO — No Render: Fetch results but don’t render — this is an unrealistic case, but it helps highlight the cost of IO vs cost of template processing.

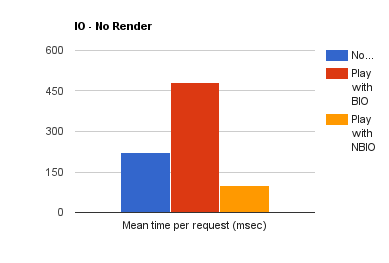

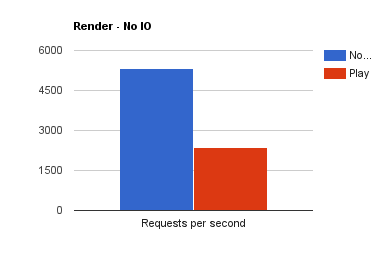

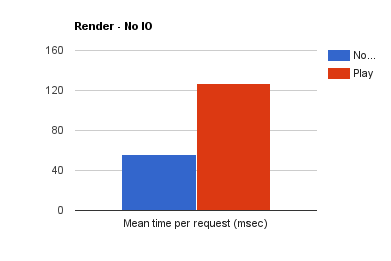

The charts below show requests per second and mean response time.

From these, you can see that Nodejs beats Play on mean response time as well as throughput. However, in the pure IO case, I would not discount non-blocking IO on the JVM. I plan to post more results dealing with IO + computation scenarios.

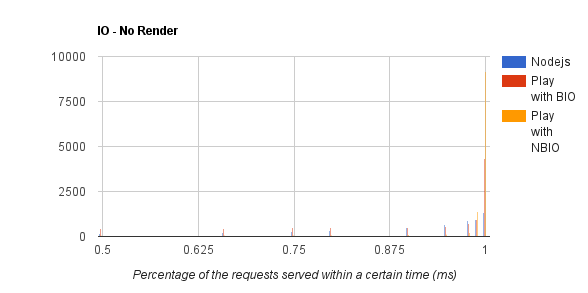

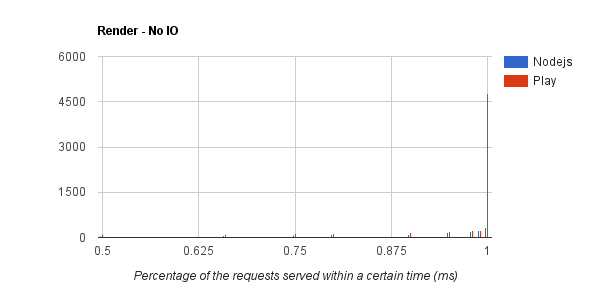
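Requests per second and mean response time are two views of the same run. When a fixed number of connections is kept busy for the whole test, the mean time per request is roughly the concurrency divided by the throughput. A small sketch of that arithmetic follows; the elapsed time used in it is a made-up number, not a result from these tests.

```javascript
// Throughput of a run in requests per second.
function requestsPerSecond(totalRequests, elapsedSeconds) {
  return totalRequests / elapsedSeconds;
}

// Mean time per request in msec, assuming `concurrency` connections
// stay busy for the whole run (equivalent to concurrency / rps * 1000).
function meanTimePerRequestMs(concurrency, totalRequests, elapsedSeconds) {
  return (concurrency * elapsedSeconds * 1000) / totalRequests;
}

// Hypothetical run: 200000 requests at concurrency 300 finishing in 100 seconds.
const exampleRps = requestsPerSecond(200000, 100);             // 2000 requests/s
const exampleMeanMs = meanTimePerRequestMs(300, 200000, 100);  // 150 msec
```

At a fixed concurrency, doubling the throughput therefore halves the mean response time, which is why the two charts tend to mirror each other.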

The charts below show the percentage of requests completed within a certain amount of time in msec. The shorter the bars, the better. Less variance as you read from left to right on each chart is also better. I would ignore the last set of bars on the right (time to complete 100% of the requests) as they may contain outliers.
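To make the reading of such charts concrete, here is a sketch that computes a "percentage completed within N msec" table from a list of per-request latencies. It uses a simple nearest-rank percentile, which is an assumption on my part and may differ in detail from how the benchmarking tool computes its figures. The sample latencies are made up.

```javascript
// Nearest-rank percentile: the smallest sample such that at least
// `pct` percent of all samples are less than or equal to it.
function percentile(sortedMs, pct) {
  const rank = Math.ceil((pct * sortedMs.length) / 100);
  return sortedMs[Math.max(rank, 1) - 1];
}

// Build the "pct of requests completed within ms" table.
function latencyDistribution(latenciesMs, pcts) {
  const sorted = [...latenciesMs].sort((a, b) => a - b);
  return pcts.map((pct) => ({ pct, ms: percentile(sorted, pct) }));
}

// Made-up latencies in msec with one slow outlier; not measurements from these tests.
const sampleLatencies = [12, 15, 11, 14, 13, 18, 16, 17, 19, 250];
const distribution = latencyDistribution(sampleLatencies, [50, 90, 100]);
// distribution: 50% within 15 msec, 90% within 19 msec, 100% within 250 msec
```

In this sample a single slow request sets the 100% figure while the 50% and 90% figures stay close together, which is why the rightmost bars are the least informative.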

When the workload involves generating HTML from templates off the file system without performing any other IO, nodejs performs about twice as well as the JVM-based Play. As we introduce IO, performance suffers across the board, but more so with blocking IO on Play. However, Play is able to catch up with non-blocking IO (via continuations).

I’m unable to make the source code for the test apps available publicly at this time. But I plan to create and post some new tests on github soon.